TL;DR: Anthropic’s new Claude Managed Agents turn “agents” from a UI gimmick into a hosted runtime: you define agents, tools, environments and rubrics; Anthropic runs long‑lived sessions in their containers so your product teams don’t have to wire up orchestration from scratch. In four launch videos – the deep dive on “What is Claude Managed Agents?”, the Cowork bumper, the 59‑second teaser, and Notion’s “How Notion built with Claude Managed Agents” story – Anthropic quietly sketches a DX that sits between OpenAI’s Agents SDK (powerful, but you own all the infra) and Google’s Gemini for Workspace (smooth in‑suite assistants, weak generic agent runtime). The upside: proper primitives for agents, sessions, environments, tools/MCP, memory and multi‑agent co‑ordination; the downside: infra lock‑in, long‑running billing risk, and a safety/governance story that still lives more in docs and contracts than in the glossy launch clips. If you’re deciding where to build your next generation of AI workflows, this piece argues that you must treat “who runs the runtime?” as the first design decision, not an implementation detail.

Agents aren’t a “nice to have” feature any more. They’re a runtime decision. And the Claude launch videos make it very clear which side of that decision Anthropic is on.

If you build, ship or market AI products for a living, the Claude Managed Agents + Cowork launch isn’t just “another AI drop”. It’s Anthropic’s clearest statement yet about how they think agents should feel for developers – and how much of the boring, brittle infrastructure they believe they can abstract away.

Why these Claude videos matter

Across four separate videos:

- “What is Claude Managed Agents?” – the deep product explainer.

- “Cowork is now generally available” – the 33‑second brand bumper.

- “Introducing Claude Managed Agents” (59s) – the tag‑line teaser.

- “How Notion built with Claude Managed Agents” – the flagship partner story.

Anthropic is quietly pitching a very opinionated developer experience:

“Stop wiring up your own orchestration layer. Define agents, tools and outcomes, and we’ll host the messy, long‑running, multi‑tool workflows in our cloud.”

If you’re comparing this to OpenAI’s agents / SDK or Google’s Gemini for Workspace / Apps Script, the real question isn’t “who has the smartest model?” but:

- Who actually removes friction for devs and PMs building production agents?

- Who’s honest about the limits of autonomy, safety and cost?

This piece unpacks what Anthropic is really selling from a developer‑experience lens, where it falls short, and how it stacks up against competitors – with a clear section on limitations that the videos skip over.

Anthropic’s DX thesis in one line

The “What is Claude Managed Agents?” explainer is the only video that respects developers enough to show real architecture.

It introduces a vocabulary that’s clearly meant to be the mental model for teams:

- Agents – workers, graders, co‑ordinators, each with tools and a persona.

- Sessions – long‑running jobs kicked off from your app, with an event stream back to your UI.

- Environments – isolated containers with full file system, Bash, web access and your own packages.

- Tools & MCP – local tools (Lighthouse, Puppeteer, Python) plus remote SaaS tools via Model Context Protocol servers.

- Memory – a long‑term store of past reports/incidents that agents can read and write.

- Outcomes & graders – explicit rubrics (for example “Lighthouse > 90; no render‑blocking resources”) and grading agents that push the worker to retry until it meets the bar.

- Multi‑agent co‑ordination – specialist agents plus an orchestrating agent sharing a file system but not a context window.
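To make the vocabulary concrete, here is a minimal sketch of how three of these primitives might relate as plain data. Every class and field name below is hypothetical and chosen for illustration; none of it is Anthropic’s API, and a real integration would go through whatever the official docs specify.

```python
from dataclasses import dataclass, field


@dataclass
class Environment:
    """Hypothetical stand-in for an isolated container definition."""
    packages: list[str] = field(default_factory=list)
    allow_web: bool = False


@dataclass
class Agent:
    """Hypothetical agent definition: a name, a role and its tools."""
    name: str
    role: str  # "worker", "grader" or "coordinator"
    tools: list[str] = field(default_factory=list)


@dataclass
class Session:
    """Hypothetical long-running job with an append-only event stream."""
    agent: Agent
    environment: Environment
    events: list[str] = field(default_factory=list)

    def emit(self, message: str) -> None:
        # Each event is tagged with the agent that produced it.
        self.events.append(f"{self.agent.name}: {message}")


env = Environment(packages=["lighthouse", "puppeteer"], allow_web=True)
worker = Agent(name="perf-worker", role="worker", tools=["bash", "lighthouse"])
session = Session(agent=worker, environment=env)
session.emit("audit started")
```

The point of the sketch is the separation: the agent and environment are definitions you own, while the session is the running thing whose events your UI consumes.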

From a DX point of view, this is Anthropic saying:

- You don’t own the orchestration infra. Anthropic will run the containers, manage lifetimes, handle logs and streams.

- You do own the semantics. You define tools, rubrics, environments, memory schemas, and how sessions are triggered inside your product.

That’s a clear separation of concerns – and honestly refreshing after a year of vague “agent” talk.

But it also means Claude Managed Agents are not “no‑code magic”. They’re infrastructure‑as‑a‑service for serious teams. You trade maximum control for a simpler path to “we actually have this in production.”

Three demos, three different DX promises

The explainer runs through three tightly scripted scenarios. Each one telegraphs a different kind of developer experience.

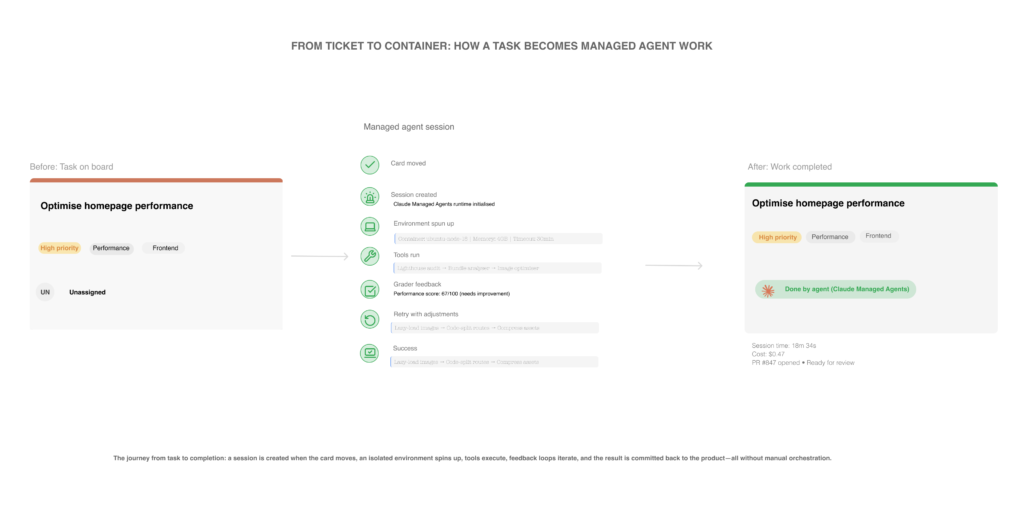

Web performance agent – DevOps / platform teams

Scenario in the video

- Trigger: move a Kanban card (“optimise website performance”) into In Progress – that fires a session.

- Under the hood:

  - A managed agent runs in an environment that has the repo mounted plus Lighthouse + Puppeteer installed.

  - The agent runs audits, edits code (compress images, defer scripts), then runs audits again.

  - A grader agent checks the result against a rubric (score above 90, no render‑blocking resources, lazy‑loaded images) and rejects the first attempt.

  - Claude reads that feedback, retries, and pushes the Lighthouse score to 96, streaming each step back to the task board via an event stream.
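The worker/grader loop in this scenario can be sketched in a few lines. This is an illustration under stated assumptions, not how the platform implements it: `grade`, `run_until_pass` and `fake_worker` are hypothetical names, the report fields are invented, and the failing first score of 84 is made up (the video only says the first attempt was rejected and the final score was 96).

```python
from typing import Callable

RUBRIC_MIN_SCORE = 90  # the video's rubric: Lighthouse score above 90


def grade(report: dict) -> list[str]:
    """Return the rubric failures; an empty list means the run passes."""
    failures = []
    if report["score"] <= RUBRIC_MIN_SCORE:
        failures.append(f"score {report['score']} is not above {RUBRIC_MIN_SCORE}")
    if report["render_blocking"] > 0:
        failures.append("render-blocking resources remain")
    if not report["images_lazy_loaded"]:
        failures.append("images are not lazy-loaded")
    return failures


def run_until_pass(attempt: Callable[[list[str]], dict], max_attempts: int = 3):
    """Call the worker, feed grader feedback back in, stop at the attempt cap."""
    feedback: list[str] = []
    for n in range(1, max_attempts + 1):
        report = attempt(feedback)
        feedback = grade(report)
        if not feedback:
            return n, report
    raise RuntimeError(f"rubric not met after {max_attempts} attempts: {feedback}")


def fake_worker(feedback: list[str]) -> dict:
    # Stand-in for the real agent: the first attempt fails, the retry passes.
    if not feedback:
        return {"score": 84, "render_blocking": 2, "images_lazy_loaded": False}
    return {"score": 96, "render_blocking": 0, "images_lazy_loaded": True}


attempts, final = run_until_pass(fake_worker)
```

Note the explicit `max_attempts`: a rubric the worker can never satisfy should end in a visible failure rather than an open-ended retry loop.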

Developer‑experience takeaway

- Anthropic is willing to give your agent real power – file‑system access, code edits, external web – inside a hosted container.

- You configure the environment and the definition of “done”; Anthropic manages the life cycle, retries, and streaming.

Compared with OpenAI’s Agents SDK, where you typically orchestrate tools and external infra yourself from your backend, Anthropic is aiming at “we’ll host your sidecars, not just your prompts”. It’s closer to Heroku for agents than “just another API endpoint”.

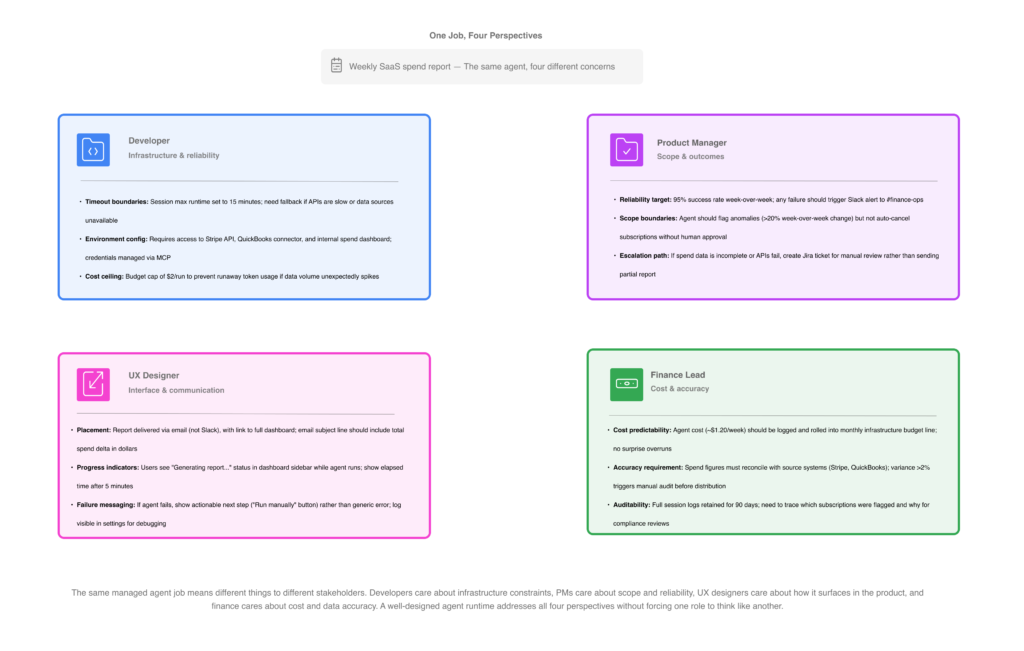

SaaS price & cost report agent – ops / finance‑adjacent

Scenario in the video

- Job: track pricing and plan changes across your SaaS stack and generate a ready‑to‑use report before stand‑up.

- Tools:

  - Web search for each vendor’s pricing page.

  - A Python analysis task in the environment’s sandbox.

  - An Excel spreadsheet MCP skill for manipulating a shared sheet.

- Integrations:

  - Write an executive‑friendly summary.

  - Post to Slack.

  - Create tasks in Asana via MCP servers.

- Memory: read last week’s report and write this week’s back, so the agent talks in deltas, not stateless strings.
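“Talking in deltas” is easy to picture as code. The sketch below assumes memory is just a dictionary holding last week’s price snapshot; the vendor names, prices and the `price_deltas` helper are all invented for illustration and say nothing about how the managed memory store is actually structured.

```python
def price_deltas(last_week: dict[str, float], this_week: dict[str, float]) -> list[str]:
    """Describe what changed between two {vendor: monthly_price} snapshots."""
    lines = []
    for vendor in sorted(set(last_week) | set(this_week)):
        old, new = last_week.get(vendor), this_week.get(vendor)
        if old is None:
            lines.append(f"{vendor}: new at {new:.2f}")
        elif new is None:
            lines.append(f"{vendor}: no longer listed")
        elif new != old:
            lines.append(f"{vendor}: {old:.2f} -> {new:.2f} ({new - old:+.2f})")
    return lines


# Read last week's snapshot from memory, compare, then write this week's back.
memory = {"last_report": {"VendorA": 20.0, "VendorB": 49.0}}
current = {"VendorA": 25.0, "VendorB": 49.0, "VendorC": 10.0}
report = price_deltas(memory["last_report"], current)
memory["last_report"] = current
```

Unchanged vendors produce no line at all, which is what keeps the stand‑up report short.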

Developer‑experience takeaway

Anthropic wants you to think in jobs, not prompts:

- “Run this weekly job, update this sheet, post in this channel,” rather than “call this model with a huge prompt”.

- You don’t wire up your own scheduler, container runner, or secrets store – but you do own schemas, tools, authorisation flows and expectations.

Compared to Gemini for Workspace, where the pitch is “we’ll sit in Gmail, Docs and Sheets and quietly help you write,” Anthropic is saying:

We’ll be the job runner behind your ops stack – you surface the results where you like.

That’s more developer‑centric, but also a heavier lift.

Incident‑response agents – SRE and platform teams

Scenario in the video

- Trigger: the monitoring stack fires an alert; a custom backend tool pipes the payload into a new session.

- Architecture:

  - One co‑ordinator agent.

  - Three specialist agents, each with its own context window but a shared file system.

- Flow:

  - Specialists analyse their slices; the co‑ordinator synthesises an incident summary and proposed remediation.

  - Before posting to Slack, a permissions policy forces human approval.

- Memory lets the co‑ordinator recognise recurring issues (such as a DNS TTL misconfiguration) and reuse that pattern next time instead of starting from scratch.
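The shape of this flow (separate contexts, a shared file system, a human gate before anything is posted) can be sketched with the standard library alone. The function names, the three specialist labels and their findings are hypothetical; the video does not say what each specialist covers, and the approval flag stands in for whatever permissions policy the platform enforces.

```python
from pathlib import Path
import tempfile


def specialist(name: str, finding: str, shared: Path) -> None:
    """Each specialist writes its finding to the shared directory."""
    (shared / f"{name}.txt").write_text(finding)


def coordinator(shared: Path) -> str:
    """The co-ordinator sees only the files, not the specialists' context."""
    findings = [p.read_text() for p in sorted(shared.glob("*.txt"))]
    return "Incident summary: " + "; ".join(findings)


def post_with_approval(summary: str, approved: bool, outbox: list[str]) -> bool:
    """Permissions gate: nothing is posted unless a human approved it."""
    if approved:
        outbox.append(summary)
    return approved


slack_outbox: list[str] = []  # stand-in for the real Slack channel
with tempfile.TemporaryDirectory() as tmp:
    shared_dir = Path(tmp)
    specialist("dns", "DNS TTL misconfigured", shared_dir)
    specialist("logs", "error spike at deploy", shared_dir)
    specialist("metrics", "latency doubled", shared_dir)
    summary = coordinator(shared_dir)

blocked = post_with_approval(summary, approved=False, outbox=slack_outbox)
```

Files are the only channel between agents here, which mirrors the “shared file system but not a context window” constraint described above.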

Developer‑experience takeaway

- Anthropic is one of the few vendors making multi‑agent orchestration a first‑class primitive instead of “you do this yourself in app code”.

- The human‑approval gate is a DX feature: it gives you a default answer to “how do we stop an agent from embarrassing us in Slack?”.

Squint and you can see the same patterns that OpenAI‑side tutorials walk you through (co‑ordinator/worker, guardrails, tracing), but baked into the platform instead of your repo. For teams who don’t want to maintain their own agent orchestrator, that’s a meaningful win.

3. The Notion case study: when DX hits real product constraints

The Notion x Claude Managed Agents video is where this architecture shows up in an actual SaaS product team’s hands.

Notion’s message:

- “We want Notion to be the agent orchestration platform where you live.”

- You can drag 30 tasks into a “Claude” column, go for a snack, and come back to prototypes or onboarding workflows generated for you.

From a developer‑experience angle, two things matter.

3.1 Task‑board‑first integration

Notion’s devs don’t show prompts. They show project boards and custom agents.

- Moving a task is effectively starting a managed‑agent session under the hood.

- The UI shows a sidebar of agent threads kicking off in parallel, a nice visualisation of Anthropic’s session + event stream model.

For product teams, this is the pattern to steal:

- Agents as participants in existing workflows (boards, tasks, docs), not a separate chat box bolted to the side.
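As a rough sketch of that pattern, assuming a board where one column is watched by agents: moving a card into it starts a session, and events land on the card’s own thread. The `Board` class, its method names and the task titles are hypothetical and are not Notion’s or Anthropic’s implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Board:
    """Hypothetical task board where one column is watched by agents."""
    agent_column: str = "Claude"
    sessions: dict[str, list[str]] = field(default_factory=dict)

    def move(self, task: str, column: str) -> None:
        # Moving a card into the agent column starts a session for that task;
        # any other move is an ordinary board update.
        if column == self.agent_column and task not in self.sessions:
            self.sessions[task] = ["session started"]

    def on_event(self, task: str, event: str) -> None:
        # Events stream back onto the card's thread instead of a chat window.
        self.sessions[task].append(event)


board = Board()
board.move("Draft onboarding flow", "Claude")
board.move("Fix typo", "Done")
board.on_event("Draft onboarding flow", "prototype ready for review")
```

The user never “talks to an agent” in this design; they move a card, and the session is a side effect of the workflow they already had.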

3.2 Infra offload vs product responsibility

The Notion PM spells out the trade‑off:

- Doing this in‑house would have been a “mega brain engineering effort” – orchestration, containers, tools, evaluation.

- With managed agents, Notion focuses on:

  - How tasks are structured.

  - What data agents can see (databases, docs, boards).

  - How humans review, iterate and approve outputs in the UI.

That’s what Anthropic’s DX pitch looks like in reality:

- Infra offloaded. Semantics, UX and governance firmly your problem.

Compared to OpenAI’s more “bring‑your‑own‑orchestration” world, this is more constrained but far quicker to production – if you can live with Anthropic’s patterns.

4. Cowork: when the DX story disappears into “Hey”

The Cowork GA video is the opposite of the explainer. It’s 33 seconds of visuals, music, and variations on “Hey”, plus one caption:

“Built for how teams work — across the tools they already use, with the controls enterprises need.”

For developers, that tells you almost nothing.

But if you read the Cowork product page and docs alongside it, a picture emerges:

- Cowork is an agentic desktop / workspace layer on top of the same managed‑agent primitives.

- It gives non‑developers a way to:

  - Run autonomous tasks and workflows (including multi‑step, parallel work).

  - Pull from local files, browsers and SaaS tools.

  - Queue, inspect and review outputs.

In other words:

- Managed Agents = infra for agents.

- Cowork = Anthropic’s opinionated client for those agents.

That’s much closer to Google’s Gemini for Workspace story (“we own the UX, we own the suite”) than OpenAI’s mostly API‑plus‑chat posture.

For DX, the implication is:

- You’ll coexist with Cowork even if you never integrate with it.

- Your agents might be running in the same underlying infra as the ones Cowork exposes, whether or not your users ever see the Cowork UI.

The video doesn’t explain this. It’s pure brand, while the dev reality lives in docs and the managed‑agents explainer. If you care about developers, you need both.

5. The limitations these videos gloss over

Let’s get to the uncomfortable bit. The power on display is real – but so are the constraints. If you’re thinking of building on Claude Managed Agents, you need to be clear on these.

5.1 You inherit Anthropic’s infra assumptions

You get:

- Isolated containers with full file system + web access, plus your chosen tools.

- Tool calls, MCP servers, event streams, memory, grading agents.

You don’t get:

- Fine‑grained control over scheduling, autoscaling, or multi‑region failover, at least not judging by the marketing materials; those knobs live inside Anthropic’s platform.

- Transparent detail in the videos on secrets handling, package supply‑chain risks, or outbound network policies – the boring but important bits.

If you work in a regulated or risk‑sensitive environment, that’s not a footnote. You’ll have to dig into documentation and contracts to answer:

- Can these environments ever see production secrets?

- How are outbound calls limited and logged?

5.2 Long‑running agents = long‑running bills

Anthropic leans hard on agents that can run for 20–60 minutes or more.

That’s exactly what many teams have been waiting for after the stateless‑chat era. It is also:

- Operationally non‑trivial.

- Financially dangerous if you don’t put hard limits in place.

Questions you’ll need answers to:

- What are the timeout and cancellation semantics?

- How do you set per‑session cost ceilings so a mis‑configured rubric can’t quietly burn through your budget?
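Until you have answers from the vendor, one option is a guard on your own side of the integration. The sketch below is a hypothetical client‑side budget object, not a platform feature: `SessionBudget`, its parameters and the per‑step costs are assumptions, and it presumes you can observe a cost per step and cancel the session when the guard trips.

```python
import time


class BudgetExceeded(RuntimeError):
    """Raised when a session crosses its cost or time ceiling."""


class SessionBudget:
    """Hypothetical client-side guard: caps spend and wall-clock time."""

    def __init__(self, max_cost_usd: float, max_seconds: float, clock=time.monotonic):
        self.max_cost_usd = max_cost_usd
        self.max_seconds = max_seconds
        self.spent_usd = 0.0
        self._clock = clock
        self._started = clock()

    def charge(self, cost_usd: float) -> None:
        """Record a step's cost and raise once either ceiling is crossed."""
        self.spent_usd += cost_usd
        if self.spent_usd > self.max_cost_usd:
            raise BudgetExceeded(f"cost cap hit: {self.spent_usd:.2f} USD")
        if self._clock() - self._started > self.max_seconds:
            raise BudgetExceeded("time cap hit")


budget = SessionBudget(max_cost_usd=1.00, max_seconds=3600)
steps_run = 0
try:
    for step_cost in [0.30, 0.30, 0.30, 0.30]:  # a grader loop that keeps retrying
        budget.charge(step_cost)
        steps_run += 1
except BudgetExceeded:
    cancelled = True  # this is where you would cancel the remote session
else:
    cancelled = False
```

The clock is injectable so the time cap can be tested without waiting; the cost cap is what protects you from a rubric that can never be satisfied.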

Independent reviews and tests are already flagging these concerns: developers love the power, but are wary of runaway costs for poorly specified tasks.

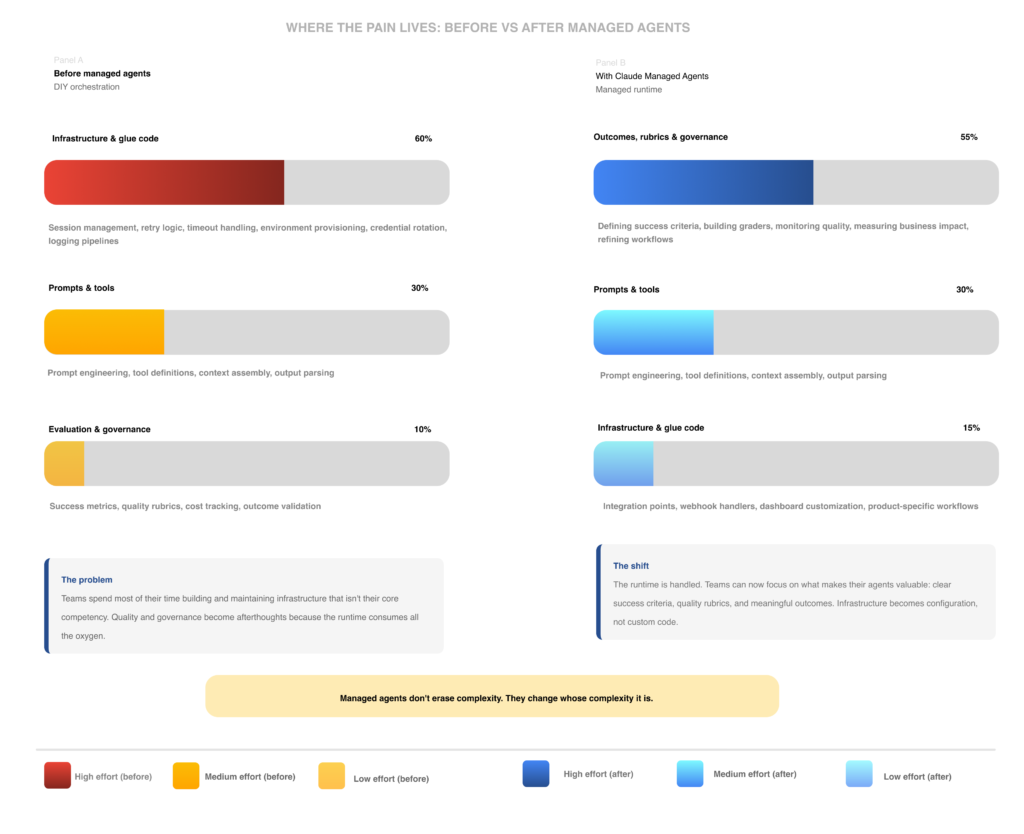

5.3 Complexity didn’t go away – it moved

Anthropic’s line is essentially:

“We take care of the infra; you just define your agent’s objectives, tools and parameters.”

Reality, even in their own examples, looks more like:

- You design:

  - Rubrics and graders that reflect business reality.

  - Environments that mirror enough of production without being dangerous.

  - Memory schemas that don’t leak sensitive context between unrelated tasks.

- You govern:

  - Who can define or modify agents.

  - How often they’re evaluated.

  - Where logs, artefacts and failures are visible.

The complexity has shifted from “how do we run this agent?” to “how do we specify and govern this agent?”. That’s a win, but not a free lunch.

5.4 Safety narrative is still thin in the marketing

To Anthropic’s credit, the explainer shows a few safety‑adjacent behaviours:

- A grader that rejects an initial attempt, forcing Claude to improve.

- A human approval gate before an incident summary is posted to Slack.

Given the level of autonomy on offer, it’s notable what’s missing in the videos:

- Concrete sandbox guarantees – what exactly can an environment touch or not touch?

- Clear data‑residency and tenant‑isolation claims, especially when repos or shared drives are mounted.

- Any treatment of adversarial tool outputs or hostile web content – not just hallucinations.

Anthropic talks more about safety and evaluation in its written materials, but the launch videos downplay it. If you’re the one on the hook when an agent goes sideways, you’ll need to pull that story out yourself.

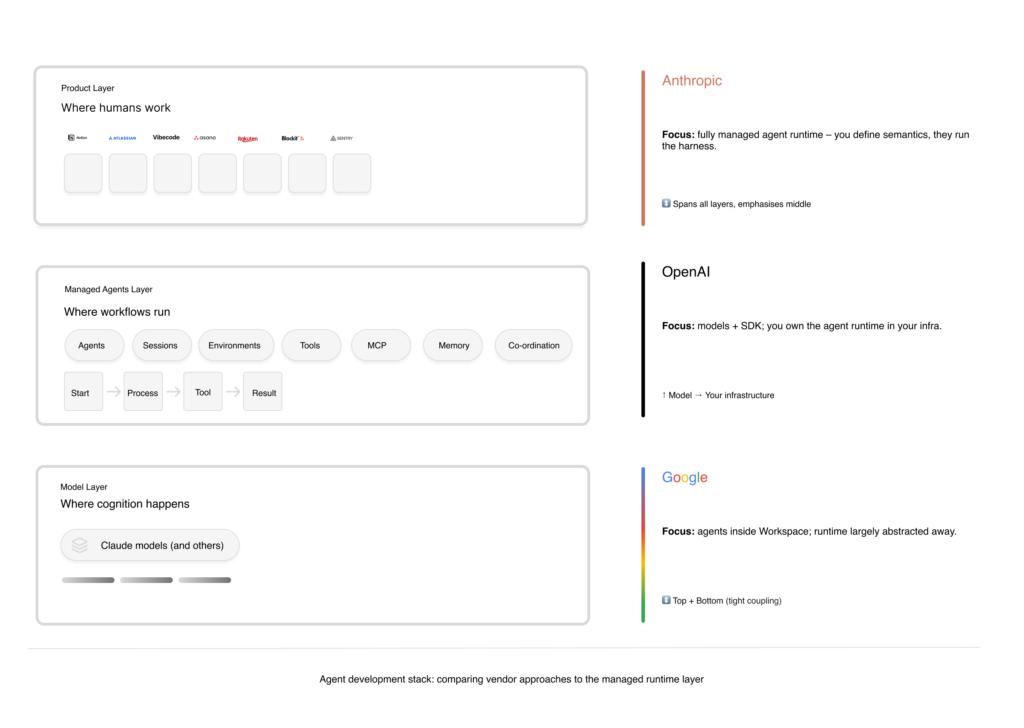

6. Anthropic vs OpenAI vs Google – a DX‑only comparison

This comparison looks only at developer experience, not model quality or branding.

6.1 Anthropic vs OpenAI Agents / SDK

OpenAI’s stance – from public talks and agent tutorials:

- Ship an API and SDK so you build and run agents inside your own infra.

- You orchestrate tools, memory, long‑running behaviour (via workers/queues/cron), and your own security perimeter.

Anthropic’s stance – from these four videos and their blog:

- Provide a fully managed agent runtime: sessions, containers, tools, memory, event streams, co‑ordination.

- You call into that runtime from your product; you don’t run your own agent orchestrator (unless you want to).

DX trade‑offs

| Dimension | Claude Managed Agents (Anthropic) | Agents / SDK (OpenAI) |
|---|---|---|
| Where agents run | In Anthropic’s cloud, in their managed containers. | In your infra; you call OpenAI models from your orchestrator. |
| Who owns orchestration code | Anthropic – sessions, retries, event streams, multi‑agent patterns are built‑in. | You – you write the orchestration layer using SDKs and frameworks. |
| Control over environment | Via their Environment abstraction: strong but opinionated. | Full control over runtime, packages, networks – but you own reliability. |
| Speed to a realistic POC | Potentially faster; you define agents, tools, environments, outcomes and start sessions. | Slower; you typically need a worker or job system before production. |
| Long‑term portability | Lower; you’re coupled to Anthropic’s infra and semantics. | Higher; in theory you can swap model vendors and keep orchestration. |

If you’re a smaller team or don’t want to hire infra engineers just for agents, Anthropic’s story is compelling. If you’re a platform team that treats orchestration as a strategic asset, OpenAI’s more bare‑metal approach may suit you better.

6.2 Anthropic vs Google’s Gemini for Workspace / Apps

Google’s Gemini for Workspace demos emphasise:

- Embedded assistants in Docs, Sheets, Gmail, doing drafting, editing, light analysis and some workflow help.

- Strong messaging around data privacy inside Google’s suite, but a relatively weak public story on agent‑level infra for arbitrary workflows.

Anthropic’s stack, by contrast:

- Is much more developer‑centric: sessions, environments, MCP are clearly aimed at teams building agentic apps, not just end‑users tweaking email copy.

- Is weaker on integrated suite UX: Anthropic doesn’t own your mail, calendar or file storage; you have to wire in tools for everything.

From a DX perspective:

- If you want deeply embedded, narrow assistants inside office tools, Google is smoother.

- If you want general‑purpose, cross‑tool, long‑running agents you can embed anywhere, Anthropic’s model is closer to what developers actually need – if you’re willing to bet on a managed runtime.

7. What devs, PMs, UX and AI marketers should actually do with this

The “why now” is straightforward: we’re finally past the chat‑bot era. Major vendors are converging on agentic workflows – but diverging on who owns the runtime.

Here’s how to use that shift well, depending on your role.

7.1 If you’re a developer or platform engineer

- Decide whether you want to own orchestration.

  - If yes: bias towards OpenAI‑style SDKs or running Anthropic’s models behind your own orchestrator.

  - If no: evaluate Claude Managed Agents seriously; the explainer plus early tutorials show enough detail to build a meaningful POC.

- Treat “environment” and “memory” design as core engineering work.

  - Build least‑privilege environments: only the tools and network access each agent absolutely needs.

  - Design scoped, auditable memory: what should persist between runs, and what must never be reused across customers or contexts?
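One way to read “scoped, auditable memory” is a store partitioned by tenant with every access logged. The sketch below is an in‑memory illustration under that assumption; `ScopedMemory`, the tenant names and the key names are hypothetical, and a production design would also need persistence, retention rules and access control on the log itself.

```python
from typing import Optional


class ScopedMemory:
    """Hypothetical memory store partitioned by tenant, with an access log."""

    def __init__(self) -> None:
        self._store: dict[tuple[str, str], str] = {}
        self.audit_log: list[tuple[str, str, str]] = []

    def write(self, tenant: str, key: str, value: str) -> None:
        self._store[(tenant, key)] = value
        self.audit_log.append(("write", tenant, key))

    def read(self, tenant: str, key: str) -> Optional[str]:
        # Lookups are keyed by tenant, so one customer's entries are
        # never returned for another customer's session.
        self.audit_log.append(("read", tenant, key))
        return self._store.get((tenant, key))


memory = ScopedMemory()
memory.write("acme", "last_incident", "DNS TTL misconfiguration")
same_tenant = memory.read("acme", "last_incident")
other_tenant = memory.read("globex", "last_incident")
```

Because the tenant is part of the key rather than a filter applied afterwards, a forgotten filter cannot leak data; the audit log then answers “who read what” after the fact.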

7.2 If you’re a product manager

- Stop promising “magic agents”. Talk in jobs and sessions.

- Force a cost and risk model from day one.

  - Any agent that gets a long‑running container gets a budget, time cap and evaluation plan.

  - Push your vendor for transparent session pricing, circuit‑breakers and monitoring instead of hand‑wavy “managed” language.

7.3 If you’re a UX / content designer

- Steal Notion’s best patterns, not their slogans.

  - Make agents feel embedded in existing workflows – task boards, docs, tickets – not tacked on as a chat window.

  - Use timelines, progress indicators and event feeds to explain long‑running work so users aren’t guessing.

- Design explicit “No” and “Not now” controls.

  - Take the incident‑response example and make approve / reject flows visible and easy – not buried in a settings menu.

  - Give users a clear way to pause or kill a run and show what that means for data and outputs.

7.4 If you’re an AI marketer

- Don’t ship pure vibes.

  - The Cowork GA spot is fun, but developers desperately need what the “What is Claude Managed Agents?” video provides: architecture, examples, trade‑offs.

  - If you’re launching agents, one asset in your campaign needs to be painfully concrete.

- Tie your stack layers together.

8. My take: Anthropic is winning the “honest agent DX” story – with real caveats

Across these four videos, Anthropic is doing something most competitors still avoid:

- Showing the actual agent lifecycle, from triggers to tools to evaluation to human approval.

- Naming the primitives developers will be living with for the next few years: agents, sessions, environments, memory, outcomes, co‑ordination.

That’s good news if you’re tired of vague “copilots” and want real building blocks.

But let’s not pretend this is effortless:

- You’re outsourcing a critical piece of your architecture to a single vendor.

- The security, cost and governance stories are still under‑explained in the public marketing, and you’ll need to interrogate them properly.

- Agents are still brittle. Long‑running autonomy amplifies both good and bad choices in prompts, rubrics and tool design.

If you ship on Claude Managed Agents, you’re making a strategic bet similar to “we’re all‑in on AWS Lambda” a decade ago. It might absolutely be the right call. Just treat it as such, not as a trend you can lightly reverse later.

Footnotes / sources

- Anthropic – “Claude Managed Agents: get to production 10x faster”.

- Anthropic – “Claude Managed Agents overview” API docs.

- Anthropic – “Scaling Managed Agents: Decoupling the brain from the body” engineering blog.

- Anthropic – “What is Claude Managed Agents?” explainer video.

- Anthropic – “Introducing Cowork” and Claude Cowork product page.

- Anthropic – Cowork GA short video.

- Notion – “How Notion built with Claude Managed Agents” case study video and LinkedIn posts.

- OpenAI – Agents SDK tutorials and multi‑agent examples.

- Google – Gemini for Google Workspace demos and reviews.

- Third‑party explainers and reviews of Claude Managed Agents.

- Guides on designing agent workflows and governance.